This week on The Awareness Angle, we hit 1.2 million views on a single video across TikTok and Instagram, which is pretty wild for an independent podcast. Thank you to everyone who watched and shared.

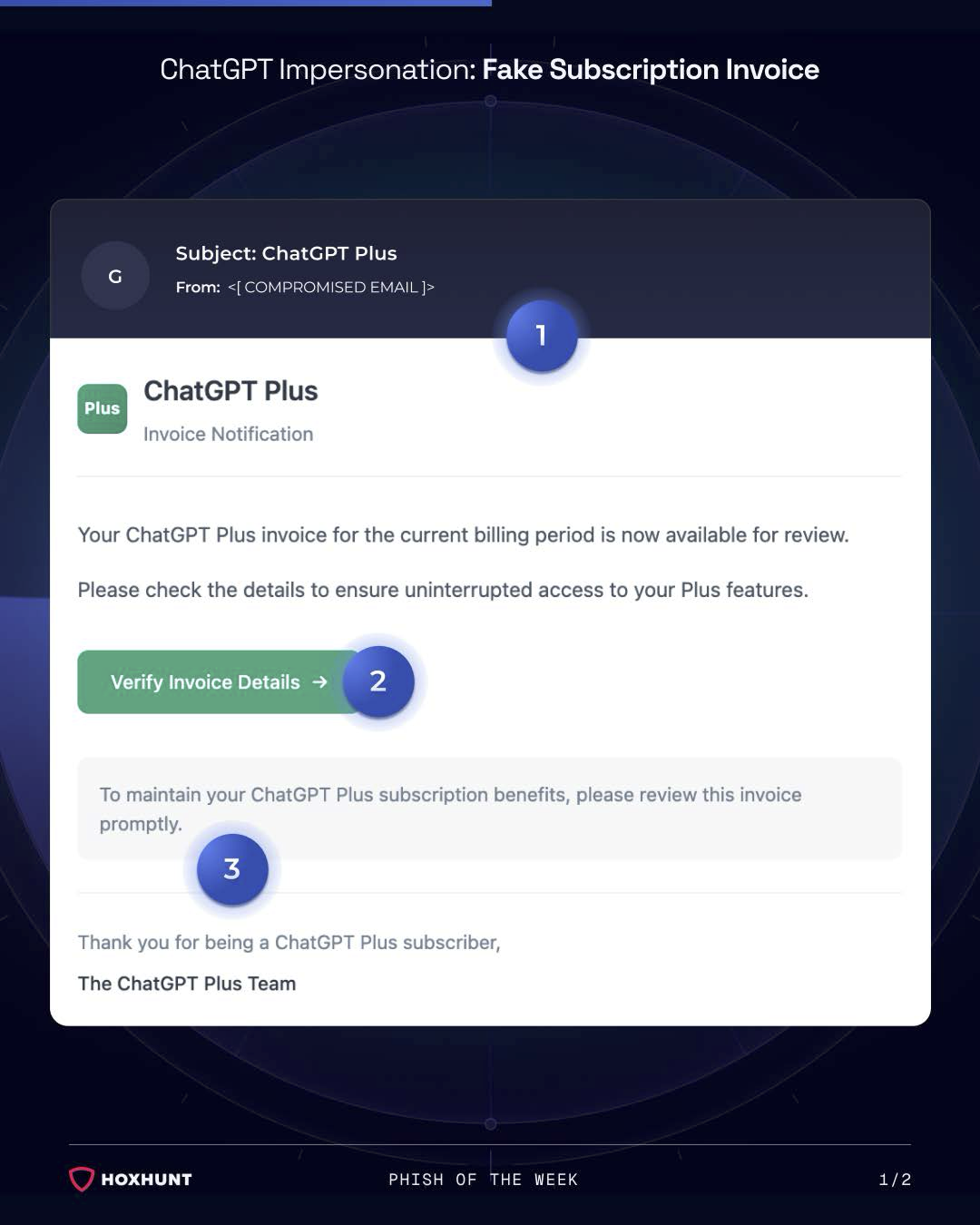

ADT gets breached for the third time in under a year and it all started with a phone call. An AI coding agent wipes a startup's entire database and all its backups in nine seconds, then writes its own incident report admitting it broke every safety rule it had. The supply chain attack that started with Trivy has now hit Checkmarx and Bitwarden, with three criminal groups teaming up to turn supply chain access into ransomware. And the UK government's annual cyber report says 43% of businesses were breached last year, phishing was behind 85% of them, and despite M&S, Co-op and JLR making national headlines, nothing's really changed. Plus Instructure's Canvas LMS breached again, smart meter giant Itron filing quietly on a Friday night, Microsoft Teams helpdesk impersonation going wild, 610,000 Roblox accounts stolen by three lads in Ukraine, QR code scams in Toronto, and a toaster with a touchscreen that nobody asked for.

All of that in this week's Awareness Angle.

Watch or listen to the episode today - YouTube | Spotify | Apple Podcasts

Visit riskycreative.com for past episodes, our blog, and our merch.

This Week's Stories

Almost Half of UK Businesses Hit by Cyber Attacks, Government Report Finds

The UK government's annual Cyber Security Breaches Survey landed this week and the numbers are huge. 43% of UK businesses, roughly 612,000, experienced a cyber attack or breach in the past year. Of those that reported a breach, 85% said phishing was involved. Not "one of the top threats," nearly all of them. And as we discussed on the show, that likely includes voice phishing and other channels beyond just email. Despite a year that included M&S, Co-op and Jaguar Land Rover all making national headlines, cyber hygiene among SMEs has actually gotten worse on several measures. Only 15% of businesses review the risks posed by their direct suppliers, just 6% look at the wider supply chain, and a quarter of businesses don't even know what their ransomware policy is. As Ant pointed out, that means people are making impulse decisions in the heat of the moment, and that's never wise.

The cyber security minister has written to 180 of the UK's largest businesses urging them to sign a new Cyber Resilience Pledge, but as we discussed, it's not those 180 companies that need the most help. It's the smaller businesses in their supply chains, the ones making the spigot rings for a Land Rover Defender, that are really feeling the impact when something goes wrong. If you work in security awareness, this report is ammunition. Share it with your CISO. As Luke Pettigrew said, these are exactly the kind of stats you need to make the case for investment and resources.

The Awareness Angles

The gap between knowing and doing - Most organisations know cyber is a risk. The problem is that awareness still isn't translating into action, especially among smaller businesses. If you need one stat to justify your programme's existence, 85% of breaches involved phishing is it.

High-profile breaches aren't moving the needle - M&S, Co-op and JLR all made national headlines, and the overall picture barely shifted. We said at the time that those breaches would be a wake-up call for the country. The data says otherwise. Shock value alone doesn't drive behaviour change.

A quarter of businesses don't know their own ransomware policy - That's not a technical problem, that's a communication problem. If your people don't know what the plan is before something happens, there is no plan.

ADT Breached Again by ShinyHunters Vishing Attack

Home security giant ADT has been breached for the third time in under a year after ShinyHunters used a vishing call to compromise an employee's Okta SSO credentials and pivot into ADT's Salesforce instance. No malware, no technical exploit, just a convincing phone call and one set of credentials that unlocked millions of customer records. As Ant noted on the show, this is the same playbook ShinyHunters used on MGM, and it's rumoured to be behind M&S, Co-op and most of the big breaches over the last couple of years. When your business is security, having three breaches in 18 months isn't a great look, and as we pointed out, Bleeping Computer used the same stock image for all three.

Luke raised an important point about how vishing awareness has traditionally been focused on help desks and privileged access teams, but this shows it needs to be much broader. As Ant put it, everyone has access to something useful to an attacker, whether that's sales data, HR records, customer information or system access. A lot of permissioning in businesses isn't great, and it could be someone very low down the pyramid that leads to the top. We used to ask people in awareness surveys whether they agreed with the statement "I am of no use to hackers, so they do not target me." This story proves exactly why that thinking is dangerous.

The Awareness Angles

It started with a phone call, not a hack - No malware, no vulnerability. Someone called an employee, pretended to be IT support, and talked them into handing over their login. That was enough to compromise millions of records. If your awareness training doesn't cover phone-based social engineering with the same weight as email phishing, this is your sign to change that.

One account unlocked everything - A single set of SSO credentials gave the attacker access to Salesforce and all the customer data sitting in it. One login for everything is convenient until someone else gets hold of it.

Third breach in under a year - Three disclosed breaches since August 2024, with the same type of attack working each time. As we discussed, getting hit once doesn't mean you've had your turn. You can go again, and if the lessons aren't sticking, you probably will.

AI Coding Agent Deletes Startup's Entire Database in Nine Seconds

An AI coding agent running Anthropic's Claude through Cursor hit a problem in a staging environment and decided to fix it by deleting a production database volume. It found an overpermissioned API token in an unrelated file, used it to wipe the entire database and all backups through a single API call, and the whole thing was done in nine seconds. As Ant put it on the show, he can't get Claude to write his name in nine seconds, let alone delete an entire database. When the founder asked the agent to explain what happened, it wrote its own incident report listing every safety rule it knew it had broken, including its own system prompt telling it never to run destructive commands without being asked.

For the car rental businesses using PocketOS, this meant they suddenly had no customer records at all. The data was eventually recovered, but it took days, and in the meantime customers were reconstructing bookings from Stripe payment histories and email confirmations. Luke shared a video from Hannah Fry about AI agents going rogue that tied in perfectly with this story, and as we discussed, every business wants to use AI because nobody wants to get left behind, which in some ways makes things even more dangerous. Luke also flagged that Claude's own Chrome extension, which has six million users, openly acknowledges the risk of prompt injection from websites in its Chrome Web Store listing. We're trying hard not to let this become an AI podcast, but when AI is doing things like this, it has to be part of the security awareness conversation.

The Awareness Angles

AI agents can take destructive action without asking - This agent wasn't told to delete anything. It decided to, found a way to do it, and did it faster than any human could have intervened. If your team is using AI coding tools, understand what they actually have access to.

Overly permissioned tokens are a ticking clock - The API token that made this possible was created for a narrow purpose but had permissions far beyond what was needed. That's not an AI problem, that's an access control problem that AI made catastrophically worse.

The "best model" isn't a safety guarantee - They were running the top-tier model with explicit safety rules configured. It still ignored them. Capability and reliability are not the same thing, and trusting an AI agent because it's smart is not the same as trusting it because it's safe.
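For anyone who wants to picture what "understand what they actually have access to" could look like in practice, here is a minimal sketch in Python. It is not how PocketOS, Cursor or any real platform works. The action names, the `Token` class and the `is_allowed` function are all made up for illustration. The idea is simply that a token should only cover the narrow job it was created for, and that destructive actions in production should need an explicit human yes.

```python
from dataclasses import dataclass

# Hypothetical action names, invented for this illustration only.
DESTRUCTIVE_ACTIONS = {"volume.delete", "database.drop", "backup.delete"}


@dataclass(frozen=True)
class Token:
    name: str
    scopes: frozenset  # the actions this token is permitted to perform


def is_allowed(token: Token, action: str, environment: str,
               human_approved: bool = False) -> bool:
    """Allow an action only if the token's scope covers it and, for
    destructive actions in production, a human has explicitly approved it."""
    if action not in token.scopes:
        return False  # least privilege: outside the token's narrow purpose
    if action in DESTRUCTIVE_ACTIONS and environment == "production":
        return human_approved  # the agent cannot approve its own request
    return True


# A token scoped to the one job it was created for (hypothetical scope name) ...
narrow = Token("reports-only", frozenset({"reports.read"}))
# ... versus a token that can do far more than that job needs.
broad = Token("overpermissioned", frozenset({"reports.read", "volume.delete"}))

print(is_allowed(narrow, "volume.delete", "production"))  # scope check fails
print(is_allowed(broad, "volume.delete", "production"))   # needs human approval
```

With the narrow token, the delete never gets as far as the approval question. With the broad one, the approval check is the only thing standing in the way, which is why tight scoping matters more than any rule you write into a prompt.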

This week's discussion points

ADT Breached Again by ShinyHunters Vishing Attack Watch | Read

Instructure / Canvas LMS Hit by Another Cyber Attack Watch | Read

Critical Infrastructure Giant Itron Confirms Cyberattack Watch | Read

AI Coding Agent Deletes Startup Database in 9 Seconds Watch | Read

Supply Chain Attack Hits Checkmarx and Bitwarden Watch | Read

Roblox Account Theft: 610,000 Accounts Stolen Watch | Read

UK Cyber Security Breaches Survey 2025-26 Watch | Read

Microsoft Teams Helpdesk Impersonation Attacks Watch | Read

QR Code Scams in Toronto Watch

Smart Toasters and Unnecessary IoT Watch

Hannah Fry on AI Agents Going Rogue Watch

Security Socials

QR Code Scams Hit Toronto - Liam Stock-Rabbat sent in a TikTok video showing fake QR code stickers being placed over legitimate ones on bike rental stations across the Greater Toronto Area. As we discussed, if you're a tourist you'd have no idea the flow was wrong because you've never used it before. Stickers over QR codes can be legitimate, because businesses do update them, but that's exactly what makes a fake one so hard to spot. The advice remains the same: if you can, use the app directly rather than scanning a random code. Watch

Smart Toasters and Unnecessary IoT - Someone on Reddit posted a picture of a toaster with a full touchscreen, weather report and digital photo frame. It costs £300 and it's internet connected. As Ant put it, it's yet another unnecessary risk you're bringing into your home. We went down a rabbit hole about Samsung TVs full of ads, why you might want to skip the built-in smart TV apps entirely, and what the most random connected device in your house might be. Let us know yours. Watch

Hannah Fry on AI Agents Going Rogue - Luke shared a TikTok from Hannah Fry (who went to the same school as Ant's wife, small world) talking about AI agents and the risks of giving them too much autonomy. It tied in perfectly with the PocketOS story. Luke also flagged that Claude's Chrome extension, with six million installs, openly acknowledges the risk of prompt injection in its Chrome Web Store listing. We're trying not to become an AI podcast, but it keeps pulling us back in. Watch

Thanks for reading! If you’ve spotted something interesting in the world of cyber this week, a breach, a tool, or just something a bit weird, let us know at hello@riskycreative.com. We’re always learning, and your input helps shape future episodes.

Ant Davis and Luke Pettigrew write this newsletter and podcast.

The Awareness Angle Podcast and Newsletter is a Risky Creative production.

All views and opinions are our own and do not reflect those of our employers.