This week we've got a hack that let strangers steal your season tickets and quietly erase stadium bans at one of Europe's biggest football clubs. The AI app with a billion-dollar Disney deal that vanished in six months. Meta's finally fighting back against scammers with AI. And Apple wants to know how old you are.

All that and more on this week's The Awareness Angle.

The full episode is an hour well spent. Watch on YouTube, listen on Spotify, Apple Podcasts, or wherever you get your podcasts. Ant and Luke give you straight-talking cyber news for people who actually care about the human side of security.

🎧 Listen on your favourite podcast platform - Spotify, Apple Podcasts and YouTube

Podcast · Risky Creative

If you work in security awareness and you've got something worth saying, this is the room to say it in.

The SANS Workforce Security & Risk Training Security Awareness and Culture Summit Call for Presentations is open right now, and the deadline is this Friday, 3rd April at 5pm ET. The summit itself runs on the 27th and 28th of August in Las Vegas at Caesars Palace, and it is the biggest gathering of security awareness, behaviour and culture professionals on the planet. 13th year running.

The summit is looking for talks, research and case studies that focus on shifting not just behaviour, but attitudes and beliefs around cybersecurity. If you've got something that's worked in your organisation, something you've learned the hard way, or a genuinely new idea worth sharing with thousands of your peers, they want to hear about it.

And if you've never presented at a conference before, this is a brilliant place to start. Mentoring is available for first-time speakers, so you won't be thrown in at the deep end on your own.

If Vegas isn't on the cards, that's not a reason to miss out either. You can present remotely, so there's really no barrier to getting involved.

The deadline is this Friday, the 3rd of April. Get your submission in.

Submit your proposal here. Get more information on the summit here.

This week's stories...

Ajax Amsterdam hack exposed fan data, allowed attackers to steal season tickets and lift stadium bans

Ajax Amsterdam didn't find out about their own security breach from their security team. They found out from journalists. A hacker had been poking around their systems, and tipped off the press before the club had any idea there was a problem.

What the hacker found was pretty significant. Every user of the Ajax app shared the same digital key. By tweaking a single request, you could act as any other user entirely. Transfer their season ticket to yourself. Change their account details. Or, and this is where it gets a bit darker, quietly remove their stadium ban. As Luke and I discussed on the episode, imagine a bunch of banned supporters suddenly finding themselves back inside the ground for one match. It's got a Channel 4 drama written all over it.

The ticket theft is frustrating. The ban removal is a safety issue. And the fact that Ajax only found out because of a journalist is a reminder that knowing something's gone wrong matters just as much as trying to stop it happening in the first place. The vulnerabilities have since been patched and the Dutch Data Protection Authority and police have been informed.

The Awareness Angle -

Ajax found out from a journalist, not their own systems - The hacker tipped off the press before Ajax even knew there was a problem. If they'd been in it for money instead of attention, hundreds of thousands of fans could have been affected before anyone noticed. Knowing something's wrong matters just as much as stopping it happening in the first place.

It wasn't a sophisticated hack, just a design flaw - Every Ajax app user shared the same digital key. Change one thing in a request and you could act as someone else entirely: transfer their ticket, change their details, lift their ban. No advanced tools required. Some of the worst breaches are just unlocked doors.

Lifting stadium bans is a safety issue, not just a data issue - Those bans exist for a reason. The idea that someone could have quietly removed them, with neither the club nor the banned person knowing, is the kind of consequence you won't find in any data breach notification.
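For the more technical readers, here is a rough sketch of the kind of flaw described above and the usual fix. Ajax hasn't published any code, so everything here is hypothetical: the `Ticket` class, the `TICKETS` and `SESSIONS` stores and both function names are made up for illustration. The flawed version trusts a user ID that arrives inside the request, which is the "change one thing and act as someone else" problem. The checked version works out who is acting from a per-user session token and confirms on the server that they actually own the ticket.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    ticket_id: str
    owner_id: str


# Hypothetical in-memory stores standing in for a real database.
TICKETS = {"T1": Ticket("T1", "alice")}
# Assumption: each user gets their own session token, not a shared key.
SESSIONS = {"token-alice": "alice", "token-mallory": "mallory"}


def transfer_ticket_flawed(request: dict) -> bool:
    """Flawed pattern: trusts the user ID supplied in the request itself."""
    ticket = TICKETS[request["ticket_id"]]
    # The caller controls "acting_user", so anyone can claim to be the owner.
    if ticket.owner_id != request["acting_user"]:
        return False
    ticket.owner_id = request["new_owner"]
    return True


def transfer_ticket_checked(session_token: str, request: dict) -> bool:
    """Safer pattern: the acting user comes from the session, not the request."""
    acting_user = SESSIONS.get(session_token)
    if acting_user is None:
        return False  # unknown or expired session
    ticket = TICKETS.get(request["ticket_id"])
    if ticket is None or ticket.owner_id != acting_user:
        return False  # not the owner, so no transfer
    ticket.owner_id = request["new_owner"]
    return True
```

In the flawed version, a request that simply says `"acting_user": "alice"` is enough to move Alice's ticket. In the checked version, a valid session for someone else gets refused because the ownership check happens on the server.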

Meta launches new anti-scam tools across WhatsApp, Facebook and Messenger using AI

At last. Meta has announced a batch of new anti-scam features across WhatsApp, Facebook and Messenger, and some of them are genuinely useful. On WhatsApp, there's a new warning when someone tries to get you to link your account to another device, which is a scam we've talked about on the show before. On Facebook, you'll start seeing alerts when a new friend request comes from an account that looks suspicious, with details like how recently the account was created and whether you have any mutual friends. Messenger is getting AI-powered detection that flags conversations showing signs of a scam, like out-of-nowhere job offers, and gives you the option to review them before you go any further.

Meta also says it removed 159 million scam ads in 2025. Which sounds impressive until you remember how many scam ads we all still see every week. Luke put it well on the episode: it's probably not going to scratch the surface. But it does feel like a shift. For a long time it seemed like these platforms weren't really trying. At least now they are.

The Awareness Angle -

AI being used to fight AI - Scammers use AI to make their attacks more convincing. Platforms like Meta are now fighting back with the same tools. It's an arms race, and these features show the platforms you use every day are at least trying to keep up.

The WhatsApp device linking scam is one to know about - Someone tricks you into sharing a code or scanning a QR code, and suddenly they've got full access to your WhatsApp on their device. The new warning gives you a moment to pause before that happens. If anyone ever asks you to scan or share a WhatsApp code for any reason, that's a red flag.

159 million scam ads is a staggering number - And that's just what they caught. Even with all that, some still get through. A polished ad on Facebook or Instagram is not proof that something is legitimate.

OpenAI shuts down Sora video app and Disney pulls its $1 billion investment deal

Remember Sora? It launched six months ago, hit a million downloads in under five days, and came with a billion-dollar deal for Disney to license characters like Mickey Mouse and Cinderella. Now it's gone. OpenAI has shut it down entirely, exiting the video generation business to focus on other things, reportedly as part of tidying up its product range ahead of a potential stock market listing.

Disney is walking away from the deal completely. Which is a bit ironic given that before they agreed to it, they'd been sending legal letters to Meta, Google and Character[.]AI over AI using their characters without permission. The thinking seemed to be: if you can't beat them, get in there and own a piece of it. That didn't work out.

On the episode I raised whether this might be a pause rather than a permanent shutdown. The tech still exists. And if AI tools start needing less computing power to run, which there are signs of, something like Sora could come back under a different name. In the meantime, the people who were using it will just move to other tools, many of which aren't subject to the same kind of oversight. So the AI slop problem on your social feeds probably isn't going anywhere.

The Awareness Angle -

AI tools can disappear overnight - Sora had a billion-dollar deal and a million downloads in five days. Six months later it's gone. If you've built anything around an AI tool, whether that's a workflow, a business or just a habit, it's worth remembering these things can vanish with very little notice.

Copyright and AI is still a mess - Disney was sending legal letters to Meta, Google and Character[.]AI over AI using its characters before doing the Sora deal. Now that deal's fallen apart too. The question of what AI can and can't do with other people's creative work is no closer to being answered.

AI-generated video is getting harder to spot, not easier - One of the issues with Sora was the volume of low-quality, misleading video it made easy to create. That problem doesn't go away just because Sora does. Other tools will fill the gap.

Apple rolls out age verification to UK iPhone users

Apple is rolling out age verification for UK users as part of a recent iOS update. To access certain features, you'll need to confirm you're over 18, either through payment details already on your account or by submitting ID. If you don't, or if you're under 18, web content filters will switch on automatically.

This is being driven by the UK's regulator Ofcom and the Information Commissioner's Office, who have been pushing platforms hard to keep children off certain types of content. Apple says it's a legal requirement in some regions, and this is their response.

On the episode we had a few questions about it. Where does the verification data actually go? Does it stay on the device, inside Apple's secure enclave, or does it go back to Apple's servers? We don't have a clear answer on that yet. I'm on the iOS beta and haven't been prompted yet, so we may come back to this one as it rolls out properly. What we do know is that a change this big and this unfamiliar is exactly the kind of thing scammers will try to piggyback on very quickly.

The Awareness Angle -

You're handing over more data to prove you're allowed to use your own phone - To access certain features, users will now need to submit ID or payment details. That raises fair questions about what gets stored and what happens if it's ever breached.

This is probably just the start - It's not just Apple. Regulators across the UK and beyond are pushing for age checks to become standard across apps and services. This is likely to become the norm, not the exception.

Scammers will jump on this straight away - A new, unfamiliar prompt asking people to verify their age is exactly the kind of thing that gets turned into a phishing campaign. Expect fake "your verification has expired" messages pretty quickly. If you're communicating this to colleagues or customers, show them what the real thing looks like before the fakes start circulating.

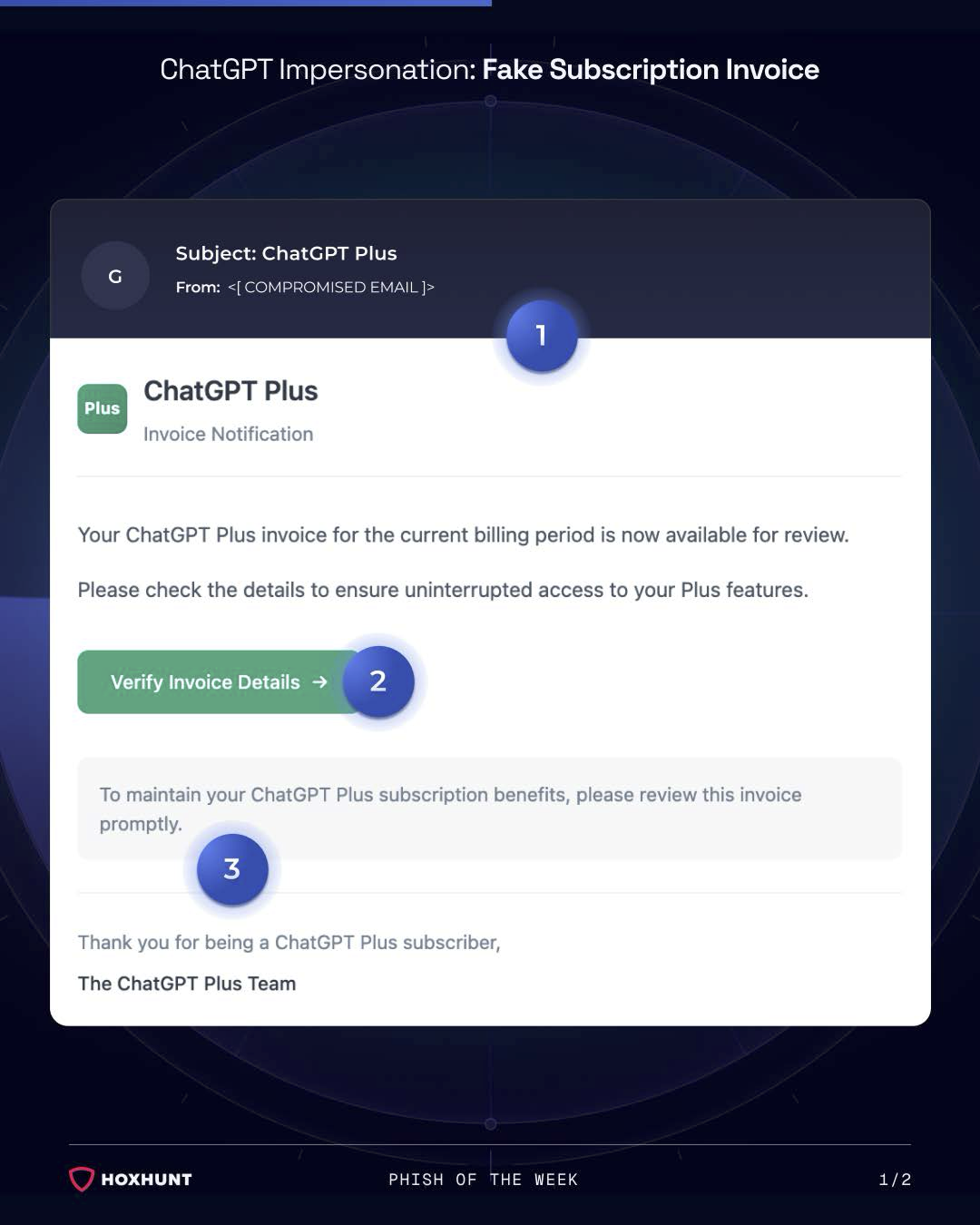

Hoxhunt Phish Of The Week

Thanks as always to the threat intelligence team at Hoxhunt for sharing this week's example.

ChatGPT impersonation - fake subscription invoice

This week's phish is impersonating ChatGPT Plus. The email mimics a subscription invoice notification using ChatGPT branding and a generic layout, claims your invoice is ready for review, and asks you to click a "Verify Invoice Details" button. The button leads to a malicious website. The message creates urgency by suggesting you'll lose access to your subscription if you don't act.

What makes this one worth flagging is that you don't even need to be a ChatGPT subscriber to fall for it. If you're not a subscriber and you get an email saying you've been charged, the instinct is to click quickly and sort it out. That's exactly what they're counting on.

Red flags to watch for:

- An invoice or subscription notification you weren't expecting

- Generic billing language with no specific details, just a button

- Urgency around losing access if you don't act immediately

- A "verify" link in the email rather than a prompt to log in to your account directly

As always, if you get a billing alert for any service, go directly to the website by typing the address yourself. Don't click the link in the email.
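If you want something hands-on for a training session, the red flags above can be turned into a very crude checker. This is only a sketch under loud assumptions: the keyword lists, the `red_flags` function and its parameters are all invented for illustration, and simple keyword matching like this is nowhere near a real email filter. It's just a way to show how the four signals stack up on a message like this week's phish.

```python
import re

# Assumed keyword lists, chosen for illustration only.
BILLING_WORDS = ("invoice", "subscription", "payment", "billing")
URGENCY_WORDS = ("immediately", "lose access", "suspended", "act now")
VERIFY_LINK = re.compile(r"\b(verify|confirm)\b", re.IGNORECASE)
AMOUNT = re.compile(r"[£$€]\s?\d")  # e.g. "£20" or "$ 20"


def red_flags(subject, body, link_texts, expected_billing=False):
    """Return the red flags (from the list above) that a message trips."""
    text = f"{subject} {body}".lower()
    flags = []
    mentions_billing = any(word in text for word in BILLING_WORDS)
    if mentions_billing and not expected_billing:
        flags.append("unexpected billing notice")
    if mentions_billing and not AMOUNT.search(body):
        flags.append("no specific billing details")
    if any(word in text for word in URGENCY_WORDS):
        flags.append("urgency")
    if any(VERIFY_LINK.search(link) for link in link_texts):
        flags.append("verify-style link")
    return flags


phish = red_flags(
    subject="Your ChatGPT Plus invoice is ready",
    body="Your invoice is ready for review. You may lose access to your "
         "subscription if you do not act.",
    link_texts=["Verify Invoice Details"],
)
print(phish)
```

A message built like this week's example trips all four flags, while an ordinary email with no billing language, urgency or "verify" button trips none. The judgement call about whether a bill was expected still has to come from the person reading it, which is why `expected_billing` is something you pass in rather than something the code guesses.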

This Week's Discussion Points...

Ajax Amsterdam hack exposed fan data, allowed attackers to steal season tickets and lift stadium bans Watch | Read

Meta launches new anti-scam tools across WhatsApp, Facebook and Messenger using AI Watch | Read

OpenAI shuts down Sora video app and Disney pulls its $1 billion investment deal Watch | Read

How a poisoned security scanner became the key to backdooring LiteLLM Watch | Read

Apple rolls out age verification to UK iPhone users Watch | Read

TikTok for Business accounts targeted in new phishing campaign Watch | Read

Lloyds app glitch let 447,000 customers see each other's transactions Watch | Read

Phish of the Week: ChatGPT impersonation - fake subscription invoice Watch

How do you deal with users who refuse to lock their laptop? Watch | Reddit

Six top tips for parents to keep children safe online Watch | Read

The Phisherman - free online safety game for kids Watch | Read

Spot a deepfake using one sentence Watch | Watch on TikTok

Real smishing campaign in France with personalised parcel photos Watch | LinkedIn

French military Strava exposure Watch | Watch on TikTok

Security Socials

Anthony's Security Social

This week I've got a few things for you.

First, I spotted a poster at my kids' school that I thought was worth sharing. It's from LGfL - SafeguardED and it's called Six Top Tips for Parents to Keep Your Children Safe Online. What I liked about it was the approach. Rather than the usual "ban everything and panic," it leads with something refreshing: don't worry about screen time, aim for screen quality. Scrolling through social media isn't the same as making a film, learning something new, or video calling grandma. There's also a nudge to check safety settings across devices, consoles and apps, to get your kids to show you what they're doing and who they're doing it with, and to talk to them about scary things in the news rather than shielding them from them. Worth sharing with parents in your organisation.

Watch | See the poster | More on SafeguardED

Second, my 11-year-old mentioned she and a friend wanted to start a games company called Barefoot Games one day, so naturally I Googled it. What I found was The Phisherman, a free online game for kids from Barefoot Computing and BT Group. It's an underwater adventure where kids earn cyber points by identifying phishing threats and learning what personal information looks like. It's gamified, it's accessible, and I'd never heard of it before. If you've got kids or you work in an organisation with parents (which is most of us), share this. It's a genuinely good tool for starting a conversation about online safety.

Third, I shared a TikTok this week of someone spotting a deepfake live on a video call using just one technique. He asked the person on the other end to hold three fingers up to the side of their face. Deepfake overlays struggle with objects interacting with the face like that and the result was pretty telling. The video has gone viral for a reason. It's a simple, memorable test that anyone can use if they're ever unsure whether the person they're talking to is real. Worth filing away.

And last, a LinkedIn post from Maxime Cartier at Hoxhunt that caught my eye this week. It shows a real smishing campaign circulating in France with a twist. It's a fake delivery notification, but instead of just a text, it includes a photo of a package with the recipient's name and full home address on the label, and a personalised link. The image makes it feel immediately real. You don't just read the message. You see your parcel. Maxime's friend assumed it was AI, but it looks more like a simple image template with text overlay. Either way, the point stands: scammers are personalising attacks with visual cues that our brains trust instantly. This is where it's going.

Luke's Security Social

This week Luke shared a TikTok showing French military personnel on what appeared to be a ship, with their Strava activity visible and their location effectively public. This isn't the first time this has happened. Back in 2018, British soldiers inadvertently revealed the location of a semi-secret military camp through their Strava data. Strava does now blur your starting point, but that only goes so far. If you're a service member or working in a sensitive environment, a fitness app with public sharing switched on could give away far more than your split times. The broader lesson for everyone is worth repeating though: think about what your apps are sharing, with whom, and whether the default settings actually reflect what you want.